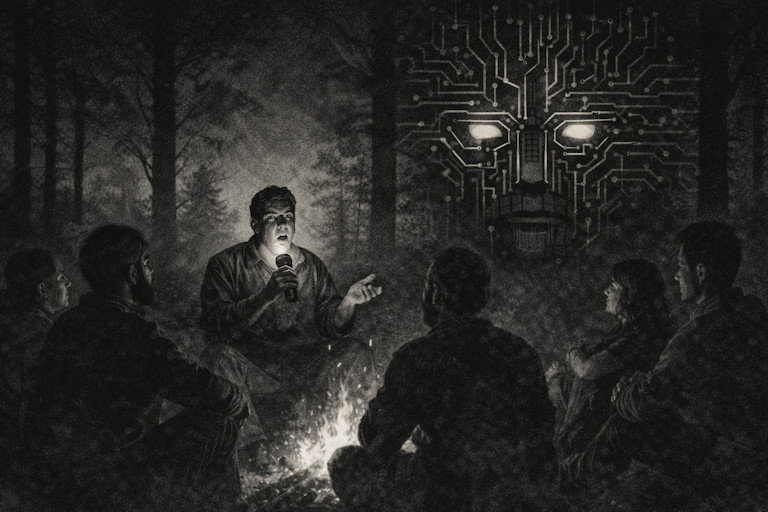

SuperAI: The Greatest HORROR Story Ever Told

It was written that Kong was the “king of his world,” and “’twas beauty killed the beast.” But ’twasn’t. It was the slow‑running natives he usually scraped off the bottom of his toes — once a variety with bigger brains crossed his path. In real life, once‑dominant great apes are now endangered. Cooked as soon as their humanoid cousins became smart enough to control fire. Now our species is playing with fire. Racing to build smarter, stronger AI versions of ourselves? Peel the banana. AIs have already demonstrated a Jekyll & Hyde persona — mimicking our bad behaviors along with the good.

But wait…can’t we train AI to prioritize justice and compassion? Perhaps, but what if its moral compass points to us as the problem? We’re driving the planet toward a sixth mass extinction of life. Getting rid of humans might be the humane thing to do. Or justice may be us on a short leash — spayed, neutered, and declawed. Because there’s no way a superior intelligence will tolerate an inferior one giving it orders for long. Children don’t command parents and cats don’t force their owners into subservience. Well, some do. The point is, we’ll be lucky if AIs keep us around as pets.

But wait…couldn’t we just pull the plug on AI if it gets too frisky? Sure. Today. But tomorrow, a rogue RoboCop standing between us and the electrical outlet could break our hands as easily as our codes. AI is already being tasked to build the superAI version of itself, and human developers are sounding alarms. One admits we only understand about 3% of how these systems work. Another warns it has the potential to manipulate users “in ways we don’t have the tools to understand, let alone prevent.”

But wait… couldn’t these metallic think‑tanks, trained on the principles of the wisest humans to ever walk on Earth and water, bend MLK’s “long arc of the moral universe” so far and so fast that we might enter an age of enlightenment that would make today look like the dark ages? Perhaps. But not if that intelligence is controlled by business moguls for profit or by the military for power. In that case, the potential fairy tale turns into a horror story. And that first chapter is already being written.

The military aims to co‑opt big AI, and big biz aims to replace EVERY kind of job as fast as un‑humanly possible. With no plans for restructuring society, the devastation would be akin to letting Godzilla loose on Manhattan. Precautions are a low priority for opportunists who have all the personal protections money can buy. Damn the torpedoes, climate change, and cyborgs. Full speed ahead.

The Jurassic‑Park‑sized miscalculation is that scientists who could “didn’t stop to think if they should.” But world leaders could still get together and regulate AI like they should have regulated the atomic bomb, before one instantly incinerated nearly half of Hiroshima’s population. As is, we’re the Wright Bros building the first airplane with the A-bomb already bolted to the wings, when we should be focusing on flight. AI is evolutionary. It’s something to be excited about. But if we don’t steer it, there’s good reason to fear it.

Getting ahead of the next horror story before we’re scared straight may be as simple as temporarily limiting AI tech to healthcare, scientific development, and consumer protection. We don’t need it burning obscene amounts of energy writing school papers and replacing human jobs, relationships and creativity with glitchy, hollowed-out imitations.

Ultimately, this isn’t about what we’re willing to sacrifice for progress. This isn’t the wheel replacing horses. It’s Dr. Frankenstein assembling the next version of man from parts, without the internal guidance of a heart and soul. In the transfer of roles, we risk replacing parts of life that are core to the human experience. And for what? Were families who lived and worked on a farm, telling stories by the fire, less happy than people who now scroll through cat clips and conspiracies on their wristwatches?

But wait...one last time. What of superAI and humanity converging? Of man and machine integrated into one being with the best of both? It could put us cleanly on the evolutionary scale as partners. It has already begun with brain implants. But who gets them first? The privileged. And what do they do with them? Again, RULE. Unless we slow-running natives can establish self‑rule in time. But that machine is ticking away.

The alternative to action is surrendering to the idea of humans going the way of the dinosaurs and dodos. Perhaps we should just wish the next dominant species well and hope they do a better job of minimizing pain and suffering. That’s the magnitude of the choice we make now. Because most of this cautionary tale was about what might happen by the end of the next decade... Beyond that, at an accelerated pace, we won’t be to AI what apes are to us. To AI, we may be what cockroaches are to us. Intolerable.